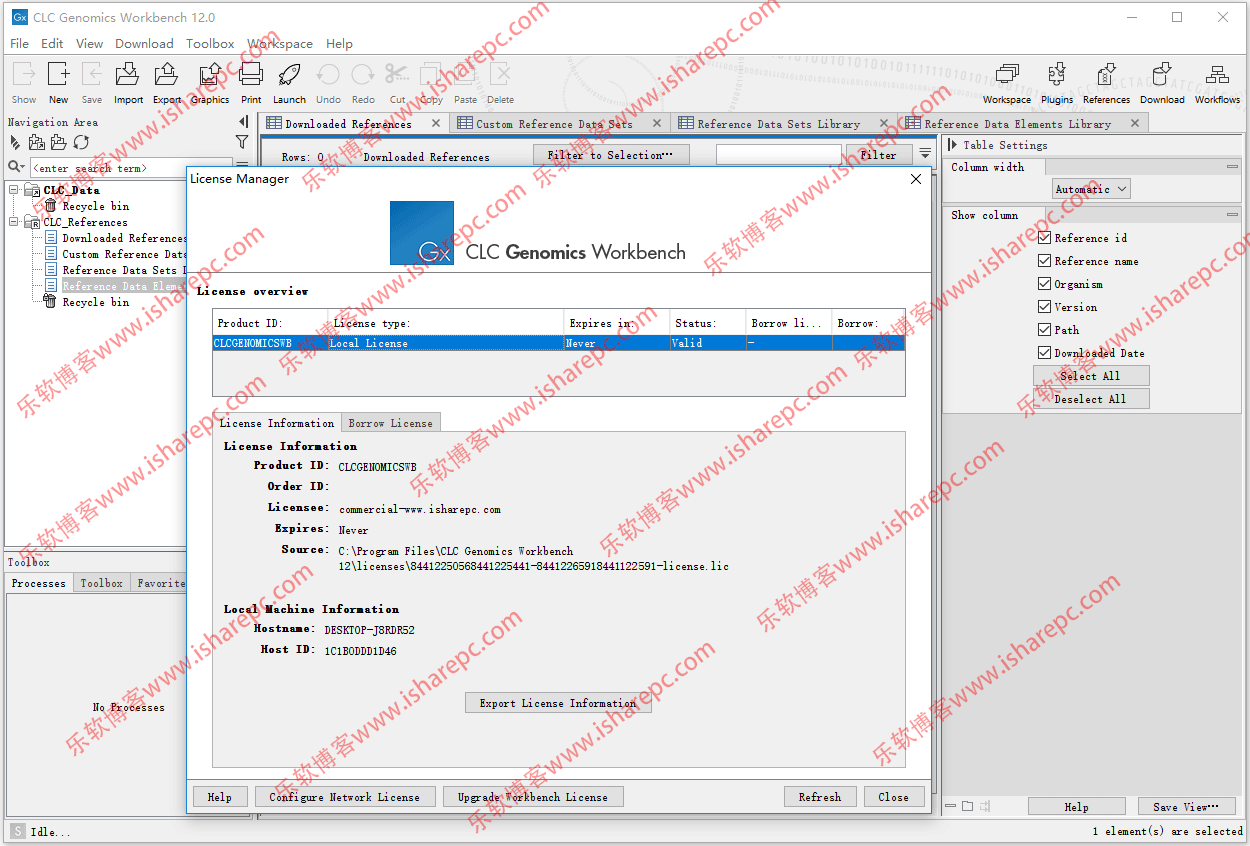

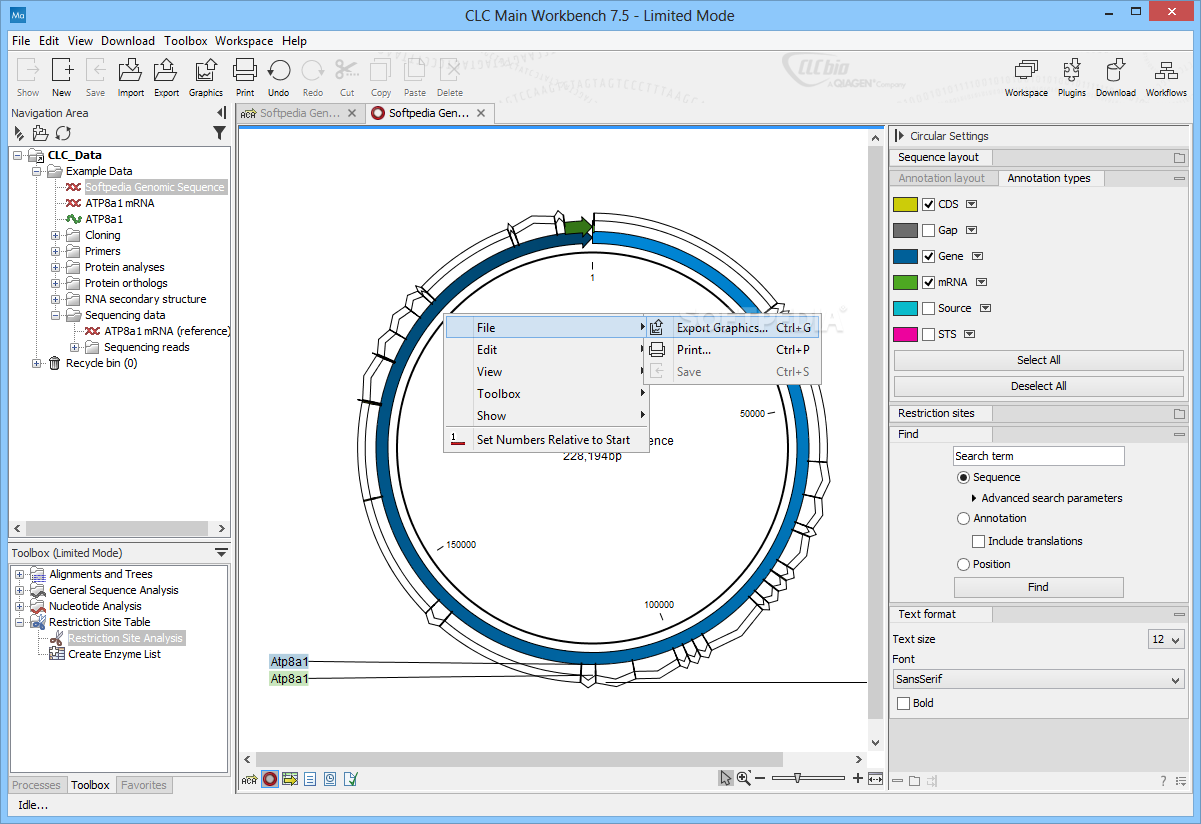

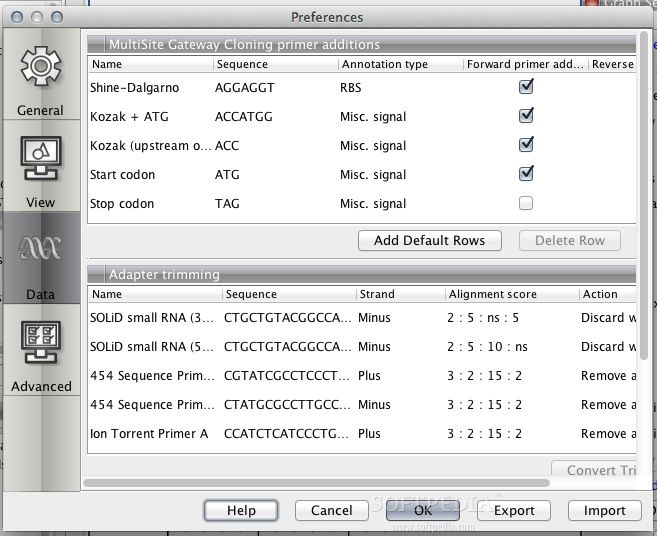

The Research Cyberinfrastructure (RCi) team at South Dakota State University offers support for high-performance computing (HPC) and high-velocity research data transfer services within the South Dakota Board of Regents (SDBOR) system. RCi currently manages the largest HPC platform within the SDBOR system for use by research and education throughout the state. In the picture shown, the 1.5 PB DDN GPFS parallel file system is at the top of the rack on the left. The larger nodes are the specialty nodes (GPU or high memory), and the main enclosures for the compute nodes (4 nodes per enclosure) are near the bottom of both racks.

Roaring Thunder's login node, rt., is the main development and job submission node of the cluster. From this system, one can run small test jobs and develop the submission script files needed to run on the cluster's worker nodes. Thunder resources are managed by SLURM (Simple Linux Utility for Resource Management), an open-source workload manager. A job is run on a worker node by submitting its script file to the SLURM scheduler, which queues the job until the node(s) it requires become available.

Two common scenarios for cluster use are high-throughput computing, where many jobs run at once on many nodes or processors, each processing a different data set, and parallel computing, where a single job is split up and run across many nodes at once.

Research computing supports over 100 Linux and Windows servers in addition to the Roaring Thunder cluster. These servers provide access to resources that span all disciplines across campus. Most recently, several servers were added to provide access to bioinformatics and GPU applications, for example:

- Prairie Thunder (PT) offers 160 cores (with Hyper-Threading) and 3 TB of RAM for non-cluster applications such as CLC Genomics Workbench.
- Iris has 160 cores (with Hyper-Threading), 3 TB of memory, and 4 NVIDIA V100 GPUs connected via NVLink, supporting our artificial intelligence and other GPU workloads.

Prairie Thunder and Iris are standalone nodes that use the same storage as Thunder, so moving between these servers and the cluster is seamless.

Access to the research nodes requires a cluster account. Please see Getting Connected for compute on-boarding instructions.

Hardware acceleration resources

Besides conventional parallel computing with processes and threads, the HPC cyberinfrastructure offers GPU- and CPU-centric performance accelerators. The latest GPU technology is available both within the cluster environment and on stand-alone servers. Unlike GPU acceleration, CPU acceleration takes advantage of the latest-generation processors combined with optimizing compilers for the specific CPU platform, both within the cluster and on stand-alone parallel processing servers. Please reach out to our team at for more information on performance acceleration of your computational pipeline.

Storage and data-flow planning services are available to enable researchers to securely store and share data in a collaborative environment.

- Kevin Brandt - Assistant Vice President for Research Cyberinfrastructure
- Chad Julius - Director of Research Cyberinfrastructure
- Anton Semenchenko - Research High-Performance Computer Specialist (Research Facilitator)
- Research Compute and Data Application Specialist - open position, active search process
- Luke Gassman - Systems Facing Research Cyberinfrastructure Support/Communications Network Analyst
- Rachael Auch - Systems Facing Research Cyberinfrastructure Support/Communications Network Analyst
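The two cluster usage scenarios described on this page, high-throughput and parallel computing, can be illustrated with a minimal Python sketch. This is not RCi's documented workflow; it assumes the script is launched by SLURM, which sets `SLURM_ARRAY_TASK_ID` for each task of a job array and `SLURM_PROCID`/`SLURM_NTASKS` for tasks started with `srun`. The file names and both helper functions are hypothetical.

```python
import os

# Hypothetical input files; on the cluster these would be your own data sets.
DATASETS = ["sample_01.fastq", "sample_02.fastq", "sample_03.fastq", "sample_04.fastq"]


def dataset_for_array_task(datasets, env=os.environ):
    """High-throughput pattern: each SLURM array task processes one data set.

    SLURM sets SLURM_ARRAY_TASK_ID for every task of a job array
    (for example, a script submitted with `sbatch --array=0-3 job.sh`).
    Falls back to task 0 when run outside SLURM, e.g. for a quick test on the login node.
    """
    task_id = int(env.get("SLURM_ARRAY_TASK_ID", "0"))
    return datasets[task_id]


def chunk_for_rank(n_items, env=os.environ):
    """Parallel pattern: one job is split across tasks.

    Returns the half-open range [start, stop) of items this task should handle.
    SLURM_PROCID is the 0-based task rank and SLURM_NTASKS the total task count;
    outside SLURM the whole range goes to a single task.
    """
    rank = int(env.get("SLURM_PROCID", "0"))
    ntasks = int(env.get("SLURM_NTASKS", "1"))
    # Spread the remainder over the first `extra` ranks so chunk sizes differ by at most 1.
    base, extra = divmod(n_items, ntasks)
    start = rank * base + min(rank, extra)
    stop = start + base + (1 if rank < extra else 0)
    return start, stop


if __name__ == "__main__":
    print("array task input:", dataset_for_array_task(DATASETS))
    print("this rank's item range:", chunk_for_rank(1000))
```

In a real parallel application the split would more likely be handled by MPI (for example through mpi4py); the manual partition here only shows how a single job divides its work among tasks, while the array example shows many independent jobs each picking their own data.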
